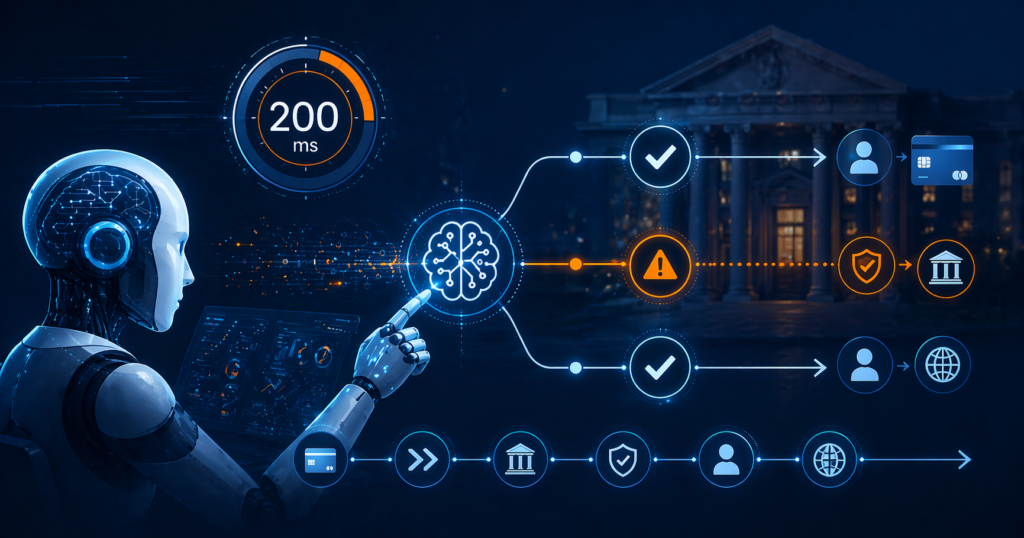

AI agents are now making payment authorization decisions in under 200 milliseconds, according to PYMNTS, fundamentally changing how money moves through the financial system. While the speed delivers competitive advantages in approval rates and revenue capture, it’s creating a compliance blind spot that mid-size banks haven’t fully grasped yet.

The shift from AI chatbots to what the industry calls “agentic AI” represents the first real test of whether financial institutions trust artificial intelligence with operational authority over money movement. Unlike earlier AI tools that simply responded to prompts, these systems can plan, reason, and execute multistep workflows across payment systems with minimal human intervention.

According to PYMNTS, 43% of chief financial officers expect agentic AI to have a high impact on dynamic budget reallocation based on real-time cost signals, with another 47% projecting a moderate impact. But this rapid adoption is happening faster than compliance frameworks can adapt, especially at mid-size institutions that lack the regulatory infrastructure of larger banks.

The Risk Nobody Is Talking About

The fundamental problem isn’t the AI decision-making itself. It’s the audit trail gap that opens when decisions happen in milliseconds across multiple systems. Traditional compliance documentation assumes human decision points that can be logged, reviewed, and explained to regulators. When an AI agent processes a payment authorization, applies risk scoring, and routes funds through multiple channels in 200 milliseconds, the standard audit trail breaks down.

Mid-size banks face the highest exposure here because they’re caught between two worlds. They need the competitive speed that AI agents provide to compete with fintech startups and larger institutions, but they don’t have the compliance infrastructure that major banks have built to handle algorithmic decision-making at scale.

Community banks and credit unions with assets between $1 billion and $50 billion are particularly vulnerable. They’re large enough that regulators expect sophisticated compliance systems, but small enough that they can’t dedicate entire teams to AI governance like JPMorgan Chase or Bank of America do.

The failure mode looks like this: An examiner asks to see the decision trail for a series of payments that were flagged in a subsequent investigation. The bank can show that the AI agent made the decisions correctly according to its programming, but can’t provide the step-by-step reasoning that regulators expect. The audit trail shows “AI decision: approved” with a timestamp, but lacks the granular documentation that would satisfy a BSA/AML examination.

How Thomson Reuters Analysis Changes the Compliance Game

A recent Thomson Reuters analysis published February 10th reveals how agentic AI workflows are reshaping anti-money laundering and know-your-customer investigations. The analysis shows that instead of analysts manually gathering data across sanctions lists, corporate registries, and adverse media databases, AI agents can autonomously collect, reconcile, and document findings while preserving an audit trail suitable for regulators.

But there’s a critical distinction here that many mid-size banks are missing. The Thomson Reuters framework works because it’s designed from the ground up with regulatory requirements in mind. The AI agents making 200-millisecond payment decisions often aren’t.

According to PYMNTS, nearly all financial services respondents in an Nvidia AI survey conducted in January plan to increase or maintain AI spending in 2026. This means more institutions are deploying agentic AI systems without necessarily building the compliance infrastructure first.

The key insight from Thomson Reuters is that agentic AI must deliver “traceable reasoning and regulator-ready documentation, especially in compliance use cases.” This isn’t happening automatically when banks implement AI agents for speed—it requires intentional design.

For compliance officers at mid-size banks, this means you can’t simply adopt the same AI payment systems that fintechs use. You need systems that are built with BSA/AML documentation requirements embedded from the start, not bolted on afterward.

The One Configuration Change Compliance Teams Need This Month

If your institution is already using or piloting AI agents for payment processing, there’s one immediate step that can prevent audit trail gaps: implement decision logging at the sub-process level.

Most AI payment systems log the final decision—approve or deny—along with a confidence score. But regulators need to see the intermediate steps: which data sources were consulted, how risk factors were weighted, and which rules triggered specific actions.

Here’s the specific configuration change: Enable verbose logging for all AI agent decisions that move money, with a minimum capture of five data points: input data sources, risk factors considered, rules applied, alternative options evaluated, and confidence thresholds met or missed.
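As a rough illustration of what capturing those five data points could look like, here is a minimal Python sketch. It is not any vendor’s actual logging schema: the `DecisionRecord` class, its field names, and the sample values are all hypothetical, chosen only to mirror the five items listed above.

```python
import json
from dataclasses import dataclass, asdict, field
from datetime import datetime, timezone


@dataclass
class DecisionRecord:
    """Hypothetical sub-process log entry for one AI payment decision."""
    transaction_id: str
    decision: str                      # e.g. "approved" or "denied"
    input_sources: list[str]           # 1. data sources consulted
    risk_factors: dict[str, float]     # 2. risk factors and their weights
    rules_applied: list[str]           # 3. rules that triggered
    alternatives_evaluated: list[str]  # 4. options considered but not taken
    confidence: float                  # 5. model confidence ...
    confidence_threshold: float        #    ... and the threshold it was held to
    logged_at: str = field(
        default_factory=lambda: datetime.now(timezone.utc).isoformat()
    )

    @property
    def threshold_met(self) -> bool:
        return self.confidence >= self.confidence_threshold

    def to_json(self) -> str:
        # asdict() covers declared fields only, so add the derived flag by hand.
        payload = asdict(self)
        payload["threshold_met"] = self.threshold_met
        return json.dumps(payload, sort_keys=True)


# Collection-valued fields that must be non-empty for a record to be complete.
REQUIRED_COLLECTIONS = (
    "input_sources", "risk_factors", "rules_applied", "alternatives_evaluated",
)


def missing_fields(record: DecisionRecord) -> list[str]:
    """Return the names of required collections that were logged empty."""
    return [name for name in REQUIRED_COLLECTIONS if not getattr(record, name)]


# Illustrative values only; none of these come from a real system.
example = DecisionRecord(
    transaction_id="txn-0001",
    decision="approved",
    input_sources=["sanctions_list", "account_history"],
    risk_factors={"velocity": 0.2, "geography": 0.1},
    rules_applied=["amount_under_limit"],
    alternatives_evaluated=["hold_for_review"],
    confidence=0.97,
    confidence_threshold=0.90,
)
print(example.to_json())
```

A completeness check such as `missing_fields` is one way to catch records that would read as “AI decision: approved” and nothing else before an examiner does.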

This will slow your 200-millisecond decisions slightly, possibly to 250 or 300 milliseconds, but it keeps you within a competitive range while creating the audit trail that compliance needs. More importantly, it gives you documentation that can satisfy examiner requests without extensive manual reconstruction.

For banks using third-party AI platforms, this means asking vendors specifically about “granular decision logging” capabilities before implementation. Many vendors can provide this functionality but don’t enable it by default because it increases data storage and processing overhead.

A second, complementary action is to establish a monthly AI decision audit process. Don’t wait for an examination to test whether you can explain your AI agent’s reasoning. Pull a random sample of 50 AI-processed transactions each month and verify that you can produce examination-ready documentation for each decision path.
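The monthly sampling check could be sketched along these lines in Python. This is an assumption-laden illustration, not a prescribed procedure: the function names, the `REQUIRED_FIELDS` tuple, and the dictionary-based log format are hypothetical stand-ins for whatever your platform actually stores.

```python
import random

# Hypothetical field names for the five data points a decision log should carry.
REQUIRED_FIELDS = (
    "input_sources", "risk_factors", "rules_applied",
    "alternatives_evaluated", "confidence_threshold",
)


def sample_for_audit(transaction_ids, sample_size=50, seed=None):
    """Pick a random sample of transaction IDs without replacement."""
    ids = list(transaction_ids)
    if len(ids) <= sample_size:
        return ids  # fewer transactions than the sample size: audit them all
    return random.Random(seed).sample(ids, sample_size)


def audit_gaps(sample, records_by_id, required_fields=REQUIRED_FIELDS):
    """Map each sampled transaction ID to the fields its log entry lacks."""
    gaps = {}
    for txn_id in sample:
        record = records_by_id.get(txn_id)
        if record is None:
            gaps[txn_id] = list(required_fields)  # no log entry at all
            continue
        missing = [name for name in required_fields if not record.get(name)]
        if missing:
            gaps[txn_id] = missing
    return gaps


# Illustrative data: one complete record and one that logged only the outcome.
logs = {
    "txn-1": {
        "input_sources": ["sanctions_list"],
        "risk_factors": {"velocity": 0.2},
        "rules_applied": ["amount_under_limit"],
        "alternatives_evaluated": ["hold_for_review"],
        "confidence_threshold": 0.9,
    },
    "txn-2": {"decision": "approved"},
}
print(audit_gaps(sample_for_audit(logs.keys()), logs))
```

An empty result means every sampled decision carried the full set of fields. Anything else is a list of transactions whose documentation would need manual reconstruction.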

Common Mistakes Compliance Teams Make With Autonomous AI Money Movement

The most frequent mistake is treating AI agents like traditional Automated Clearing House (ACH) systems. ACH systems follow predetermined rules that can be documented and explained. AI agents adapt their decision-making based on pattern recognition and machine learning, which creates different compliance obligations.

Another common error is assuming that because an AI system is more accurate than human processors, it automatically meets compliance requirements. Accuracy and auditability are separate issues. An AI agent might correctly identify 99.5% of suspicious transactions, but if it can’t explain its reasoning in regulatory language, it creates compliance risk.

Mid-size banks also frequently underestimate the data governance requirements. As Moody’s reported on January 16th, agentic AI in financial services must rely on proprietary, domain-specific data combined with explainable reasoning frameworks. This means you can’t simply plug AI agents into existing data silos—you need unified, governed datasets that support reasoning across structured and unstructured inputs while preserving traceability.

The final mistake is implementing AI agents without updating existing compliance policies. If your BSA/AML policy assumes human review for certain transaction types, but AI agents are now processing those transactions autonomously, you have a policy gap that examiners will identify.

Bottom Line for Community Bank CTOs

Autonomous AI money movement isn’t optional: your competitors are already using it, and customer expectations around payment speed will force adoption. But you can’t implement these systems the same way fintechs do. You need AI agents that are designed with compliance documentation requirements built in, not added as an afterthought. The extra 50 to 100 milliseconds required for granular decision logging is a worthwhile trade-off for examination-ready audit trails.

Key Takeaways

- AI agents making 200-millisecond payment decisions create audit trail gaps because traditional compliance documentation can’t keep pace with algorithmic decision-making at millisecond speeds

- Mid-size banks face the highest compliance risk from autonomous AI money movement because they need competitive speed but lack the regulatory infrastructure that major banks have built for algorithmic oversight

- Enable verbose logging for all AI money movement decisions, capturing at least five data points: input sources, risk factors, rules applied, alternatives evaluated, and confidence thresholds

The question isn’t whether AI agents will handle more of your payment processing—according to PYMNTS data, that’s already decided. The question is whether you’ll build compliance controls that can explain their decisions to regulators before your next examination.

Source: PYMNTS